The NVIDIA A100 Liquid Cooled accelerates workloads of every size. Whether you use Multi-Instance GPU (MIG) to partition one A100 into smaller instances or NVLink to connect multiple GPUs for large-scale workloads, the A100 adapts to each application's needs, from the smallest job to the largest multi-node workload.

| Brand | NVIDIA |
|---|---|
| Model number | A100 |
| Availability | In stock |
| Product SKU | NVA100TCGPU80L-KIT |
| Minimum order quantity | 1 |
| Listed date | 6 Nov 2023 |

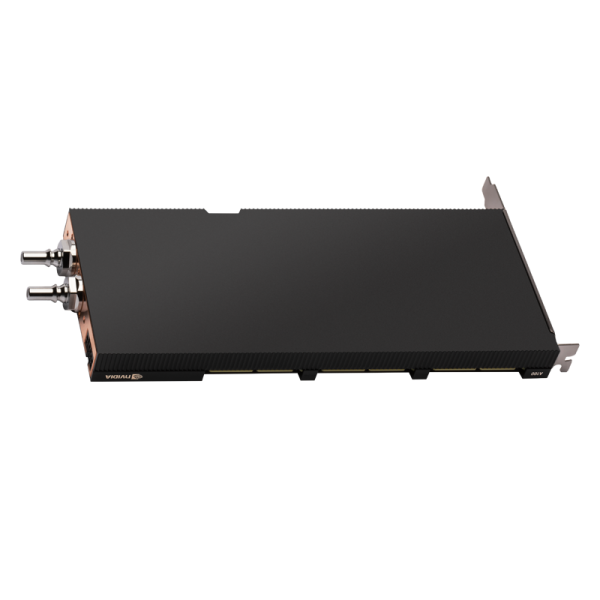

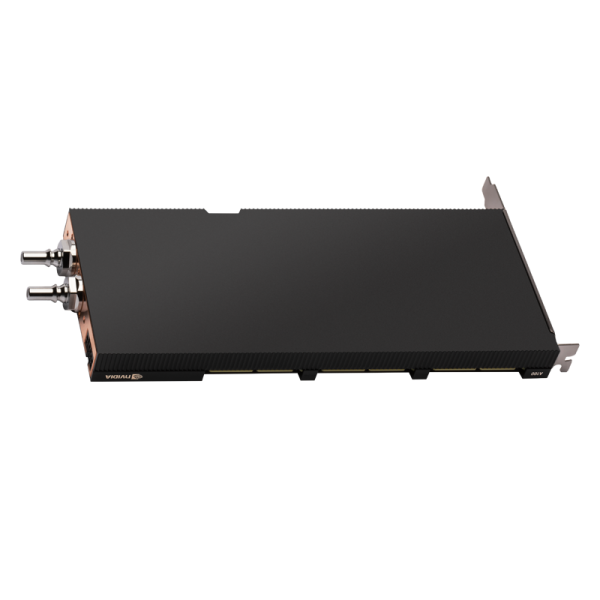

| Product | NVIDIA A100 Liquid Cooled PCIe |
|---|---|
| Architecture | Ampere |
| Process size | 7 nm (TSMC) |
| Transistors | 54 billion |
| Die size | 826 mm² |
| CUDA cores | 6,912 |
| Streaming Multiprocessors | 108 |
| Tensor Cores | 432 (3rd generation) |
| Multi-Instance GPU (MIG) support | Yes, up to 7 instances per GPU |
| Peak FP64 | 9.7 TFLOPS |
| Peak FP64 Tensor Core | 19.5 TFLOPS |
| Peak FP32 | 19.5 TFLOPS |
| Peak TF32 Tensor Core | 156 TFLOPS (312 TFLOPS with sparsity) |
| Peak FP16 Tensor Core | 312 TFLOPS (624 TFLOPS with sparsity) |
| Peak INT8 Tensor Core | 624 TOPS (1,248 TOPS with sparsity) |
| Peak INT4 Tensor Core | 1,248 TOPS (2,496 TOPS with sparsity) |
| NVLink | 2-way bridge, standard or wide slot spacing |
| NVLink interconnect bandwidth | 600 GB/s bidirectional |
| GPU memory | 80 GB HBM2e |
| Memory interface | 5,120-bit |
| Memory bandwidth | 1,555 GB/s |
| System interface | PCIe 4.0 x16 |
| Thermal solution | Liquid cooled |
| vGPU support | NVIDIA AI Enterprise |
| Power connector | PCIe 16-pin |
| Total board power | 300 W |
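Several rows in the spec table follow from a few per-SM quantities. The Python sketch below re-derives some of them as a sanity check. It assumes 64 FP32 cores, 32 FP64 units and 4 Tensor Cores per SM and a 1410 MHz boost clock; these are NVIDIA's published A100 architecture figures, not values stated in this listing. It also assumes one fused multiply-add (2 FLOPs) per unit per cycle.

```python
# Sanity-check derived figures in the A100 spec table.
# ASSUMPTIONS (not stated in this listing): per-SM unit counts and the
# 1410 MHz boost clock come from NVIDIA's published A100 figures.

SMS = 108                     # Streaming Multiprocessors (from the table)
FP32_CORES_PER_SM = 64        # assumed
FP64_UNITS_PER_SM = 32        # assumed
TENSOR_CORES_PER_SM = 4       # assumed
BOOST_CLOCK_HZ = 1.41e9       # assumed boost clock (1410 MHz)
MEMORY_BUS_BITS = 5120        # from the table
MEMORY_BANDWIDTH_GBPS = 1555  # GB/s, from the table


def peak_tflops(units: int, clock_hz: float) -> float:
    """Peak throughput, counting one FMA (2 FLOPs) per unit per cycle."""
    return units * 2 * clock_hz / 1e12


cuda_cores = SMS * FP32_CORES_PER_SM
tensor_cores = SMS * TENSOR_CORES_PER_SM
fp32_tflops = peak_tflops(cuda_cores, BOOST_CLOCK_HZ)
fp64_tflops = peak_tflops(SMS * FP64_UNITS_PER_SM, BOOST_CLOCK_HZ)

# Bytes moved per transfer across the full bus, and the per-pin data
# rate implied by the listed bandwidth (1 bit per pin per transfer).
bytes_per_transfer = MEMORY_BUS_BITS // 8
per_pin_gbit_s = MEMORY_BANDWIDTH_GBPS / bytes_per_transfer

print(f"CUDA cores:   {cuda_cores}")
print(f"Tensor cores: {tensor_cores}")
print(f"Peak FP32:    {fp32_tflops:.1f} TFLOPS")
print(f"Peak FP64:    {fp64_tflops:.1f} TFLOPS")
print(f"Per-pin rate: {per_pin_gbit_s:.2f} Gbit/s")
```

Under these assumptions the script reproduces the table's 6,912 CUDA cores, 432 Tensor Cores, 19.5 TFLOPS FP32 and 9.7 TFLOPS FP64. It also shows that 1,555 GB/s over a 5,120-bit bus implies roughly 2.43 Gbit/s per pin.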
